Claude

Anthropic

Latest Claude updates

16 published updates

Higher usage limits for Claude and a compute deal with SpaceX

We’ve raised Claude's usage limits and agreed a new compute partnership with SpaceX that will substantially increase our capacity in the near term.

Agents for financial services

We're releasing ten new Cowork and Claude Code plugins, integrations with the Microsoft 365 suite, new connectors, and an MCP app for financial services and insurance organizations.

Building a new enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

Claude for Creative Work

Anthropic Sydney office

Introducing The Anthropic Institute

We’re launching The Anthropic Institute, a new effort to confront the most significant challenges that powerful AI will pose to our societies.

Latest Claude videos

170 published videos

YouTube

Why treat AI models well?

What happens when we're uncertain if AI deserves moral consideration? Anthropic researcher Amanda Askell explains why treating AI models well matters.

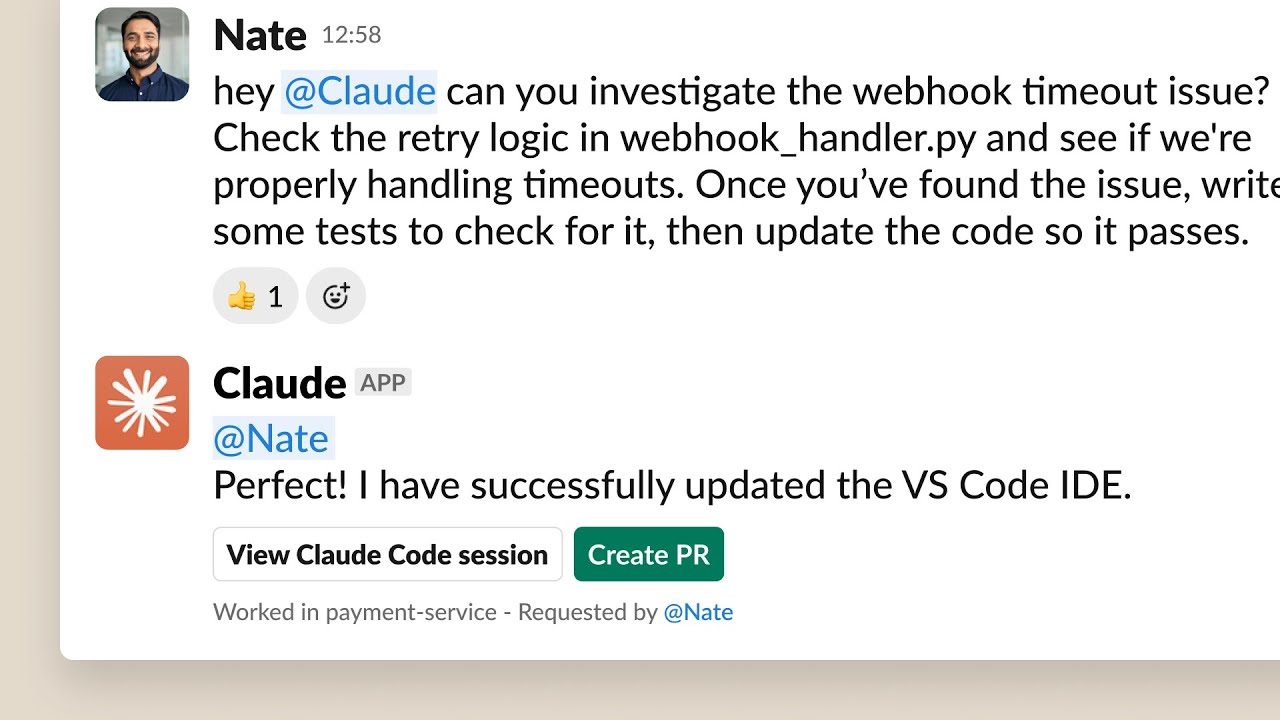

Claude Code in Slack

Delegate tasks to Claude Code directly from Slack, making it easy to move context from Slack conversations to coding sessions. Claude Code in Slack is available now for teams with the Claude app installed in their Slack workspace and who ha...

How Anthropic uses Claude in Legal

Legal teams lose days per week to routine tasks like contract redlining and marketing reviews. Mark Pike, Associate General Counsel, shares how Anthropic's lawyers use Claude to build workflows that cut review times from days to hours, no c...

Anthropic’s philosopher answers your questions

Amanda Askell is a philosopher at Anthropic who works on Claude's character. In this video, she answers questions from the community about her work, reflections and predictions.

AI Fluency for nonprofits course trailer

A trailer for the AI Fluency for nonprofits course, developed by Anthropic and Giving Tuesday. View the full free course, including all videos, exercises, and resources, at https://www.anthropic.com/ai-fluency-for-nonprofits

Getting started with research in Claude.ai

See how Claude's Research feature transforms how you find and analyze information. This tutorial demonstrates how to use Research for comprehensive, multi-source analysis that would typically take hours of manual work. Learn how to craft ef...

Anthropic was founded in 2021 by former OpenAI researchers who wanted to tackle AI safety as a core engineering discipline, not an afterthought. Their Constitutional AI approach, which trains models to be helpful, harmless, and honest, has become an industry reference point. Claude is now one of the most widely used AI assistants, recognized for its nuanced reasoning, its long context window, and a lower tendency to hallucinate than its peers.

Timeline

Anthropic founded by former OpenAI researchers focused on AI safety.

Claude 1 released; Constitutional AI technique published.

Claude 2 released with 100K context window.

Claude 3 family (Haiku, Sonnet, Opus) launches; major Amazon investment.

Claude 3.5 Sonnet sets new benchmark scores; Model Context Protocol (MCP) released.

Claude 4 family released; Claude Code launched as an agentic coding tool.