Claude

Anthropic

Anthropic was founded in 2021 by former OpenAI researchers who wanted to tackle AI safety as a core engineering discipline, not an afterthought.

Latest Claude updates

16 published updates

Higher usage limits for Claude and a compute deal with SpaceX

We’ve raised Claude's usage limits and agreed a new compute partnership with SpaceX that will substantially increase our capacity in the near term.

Agents for financial services

We're releasing ten new Cowork and Claude Code plugins, integrations with the Microsoft 365 suite, new connectors, and an MCP app for financial services and insurance organizations.

Building a new enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

Claude for Creative Work

Anthropic Sydney office

Introducing The Anthropic Institute

We’re launching The Anthropic Institute, a new effort to confront the most significant challenges that powerful AI will pose to our societies.

Latest Claude videos

170 published videos

YouTube

YouTube

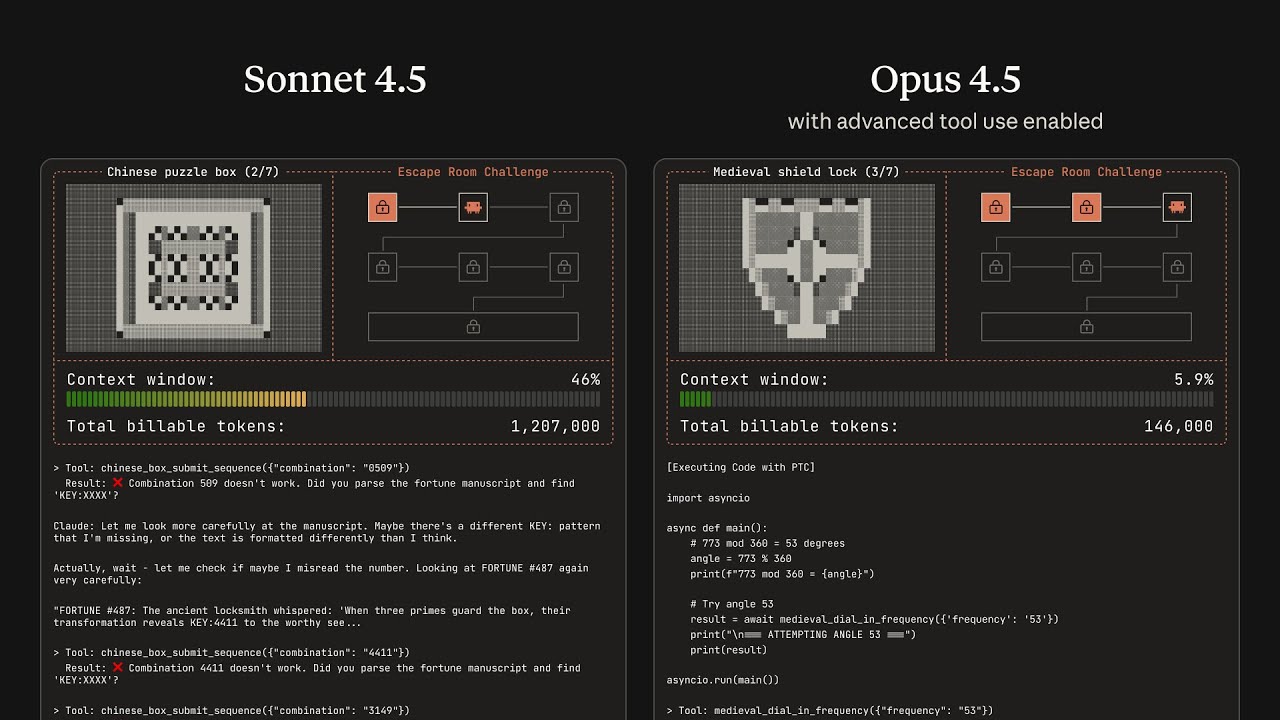

Claude Opus 4.5 solves a puzzle game

Watch Claude complete a puzzle game using new capabilities that enable Claude to take action in the real world—the tool search tool and programmatic tool calling. Together, these updates enable Claude to navigate large tool libraries, chain...

What is AI "reward hacking"—and why do we worry about it?

We discuss our new paper, "Natural emergent misalignment from reward hacking in production RL". In this paper, we show for the first time that realistic AI training processes can accidentally produce misaligned models. Specifically, when la...

Turning Claude into your thinking partner

We’ve shipped a lot of new features in Claude over the past few months—memory, voice, file creation, and more. Together, they add up to something bigger: Claude as a thinking partner. Now, Claude isn’t just answering questions but staying w...

Claude Code modernizes a legacy COBOL codebase

Watch how Claude Code helps modernize a mainframe codebase. Starting with code from an AWS Mainframe Modernization demo environment, Claude Code analyzes business logic, dependencies, and data flows, then helps refactor it into Java while...

Generating real-time credit intelligence with Claude

Watch Claude go from market signal to investment decision in minutes, generating real-time credit intelligence about Walmart's 2030 bonds tightening before a 2pm portfolio review. In this demo, Claude pulls live bond curves from LSEG, ana...

Accelerating private equity deal flows with Claude

Watch Claude handle a complete investment banking and private equity deal workflow—from creating a client-ready teaser by pulling financials from Egnyte, to screening the opportunity against investment criteria in SharePoint and building an...

Anthropic's Constitutional AI approach, which trains models against a written set of principles to be helpful, harmless, and honest, has become an industry reference point. Claude is now one of the most widely used AI assistants, recognized for its nuanced reasoning, its long context window, and a lower tendency to hallucinate than comparable assistants.

Timeline

Anthropic founded by former OpenAI researchers focused on AI safety.

Claude 1 released; Constitutional AI technique published.

Claude 2 released with a 100K-token context window.

Claude 3 family (Haiku, Sonnet, Opus) launches; major Amazon investment.

Claude 3.5 Sonnet sets new benchmark scores; the Model Context Protocol (MCP) is released.

Claude 4 family released; Claude Code launched as an agentic coding tool.