Claude

Anthropic

Latest Claude updates

Higher usage limits for Claude and a compute deal with SpaceX

We’ve raised Claude's usage limits and agreed a new compute partnership with SpaceX that will substantially increase our capacity in the near term.

Agents for financial services

We're releasing ten new Cowork and Claude Code plugins, integrations with the Microsoft 365 suite, new connectors, and an MCP app for financial services and insurance organizations.

Building a new enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

Claude for Creative Work

Anthropic Sydney office

Introducing The Anthropic Institute

We’re launching The Anthropic Institute, a new effort to confront the most significant challenges that powerful AI will pose to our societies.

Latest Claude videos

Translating Claude’s thoughts into language

AI models like Claude talk in words but think in numbers. These numbers, called activations, encode Claude’s thoughts, but not in a language we can read. We are introducing Natural Language Autoencoders, or NLAs, which translate AI models’...

An initiative to secure the world's software | Project Glasswing

Project Glasswing is a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure th...

When AIs act emotional

AI models sometimes act like they have emotions—why? We studied one of our recent models and found that it draws on emotion concepts learned from text to inhabit its role as Claude, the AI assistant. These representations influence its be...

Introducing Claude Opus 4.6

Our smartest model got an upgrade. Claude Opus 4.6 plans more carefully, stays on task longer, and works more autonomously, so you can do more with less back-and-forth. Read more: https://anthropic.com/news/claude-opus-4-6

Claude on Mars

On December 8, the Perseverance rover safely trundled across the surface of Mars. This was the first AI-planned drive on another planet. And it was planned by Claude. Engineers at NASA Jet Propulsion Laboratory used Claude to plot out the...

YouTube

YouTube

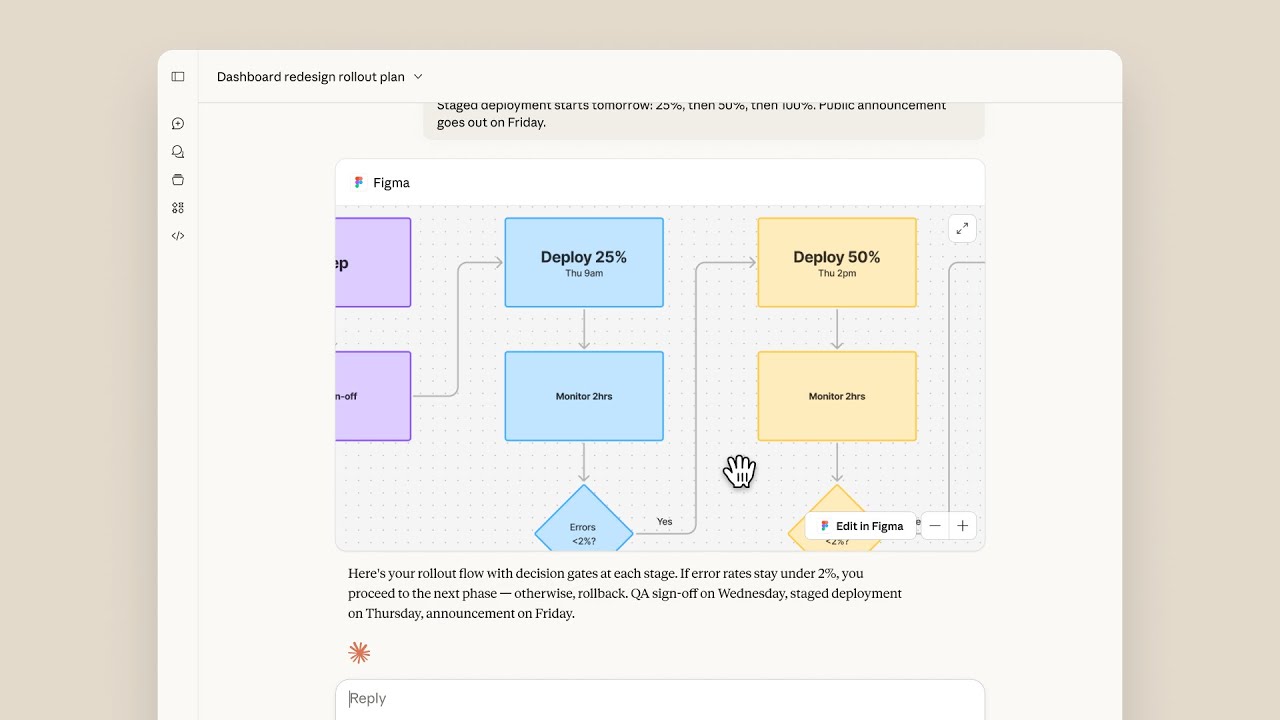

Your tools are now interactive in Claude

Your connected tools are now interactive inside Claude. Manage projects in Asana, draft messages in Slack, build charts in Amplitude, and create diagrams in Figma—without switching tabs.

Anthropic was founded in 2021 by former OpenAI researchers who wanted to tackle AI safety as a core engineering discipline, not an afterthought. Their Constitutional AI approach, which trains models to follow a written set of principles using AI-generated feedback, has become an industry reference point. Claude is now one of the most widely used AI assistants and is often noted for its nuanced reasoning, long context window, and lower tendency to hallucinate than comparable models.

Timeline

Anthropic founded by former OpenAI researchers focused on AI safety.

Claude 1 released; Constitutional AI technique published.

Claude 2 released with a 100K-token context window.

Claude 3 family (Haiku, Sonnet, Opus) launches; major Amazon investment.

Claude 3.5 Sonnet sets new benchmark scores; Model Context Protocol (MCP) released.

Claude 4 family released; Claude Code launched as an agentic coding tool.