Claude

Anthropic

Anthropic was founded in 2021 by former OpenAI researchers who wanted to tackle AI safety as a core engineering discipline, not an afterthought.

Latest Claude updates

16 published updates

Higher usage limits for Claude and a compute deal with SpaceX

We’ve raised Claude's usage limits and agreed a new compute partnership with SpaceX that will substantially increase our capacity in the near term.

Agents for financial services

We're releasing ten new Cowork and Claude Code plugins, integrations with the Microsoft 365 suite, new connectors, and an MCP app for financial services and insurance organizations.

Building a new enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

Claude for Creative Work

Anthropic Sydney office

Introducing The Anthropic Institute

We’re launching The Anthropic Institute, a new effort to confront the most significant challenges that powerful AI will pose to our societies.

Latest Claude videos

170 published videos

YouTube

The future of agentic coding with Claude Code

Anthropic's Boris Cherny (Claude Code) and Alex Albert (Claude Relations) discuss the current and future state of agentic coding, the evolution of coding models, and designing Claude Code's "hackability." Boris also shares some of his favor...

Research Preview: Claude for Chrome

Threat Intelligence: How Anthropic stops AI cybercrime

AI helps people work more efficiently. Unfortunately, this also applies to criminals. We've discovered that our own AI models are being used in sophisticated cybercrime operations, including a large-scale fraud scheme run by North Korea....

Building and prototyping with Claude Code

Anthropic's Cat Wu (Claude Code) and Alex Albert (Claude Relations) discuss how the Claude Code team prototypes new features, best practices for using the Claude Code SDK and other learnings from building our agentic coding solution alongsi...

Interpretability: Understanding how AI models think

What's happening inside an AI model as it thinks? Why are AI models sycophantic, and why do they hallucinate? Are AI models just "glorified autocompletes", or is something more complicated going on? How do we even study these questions scie...

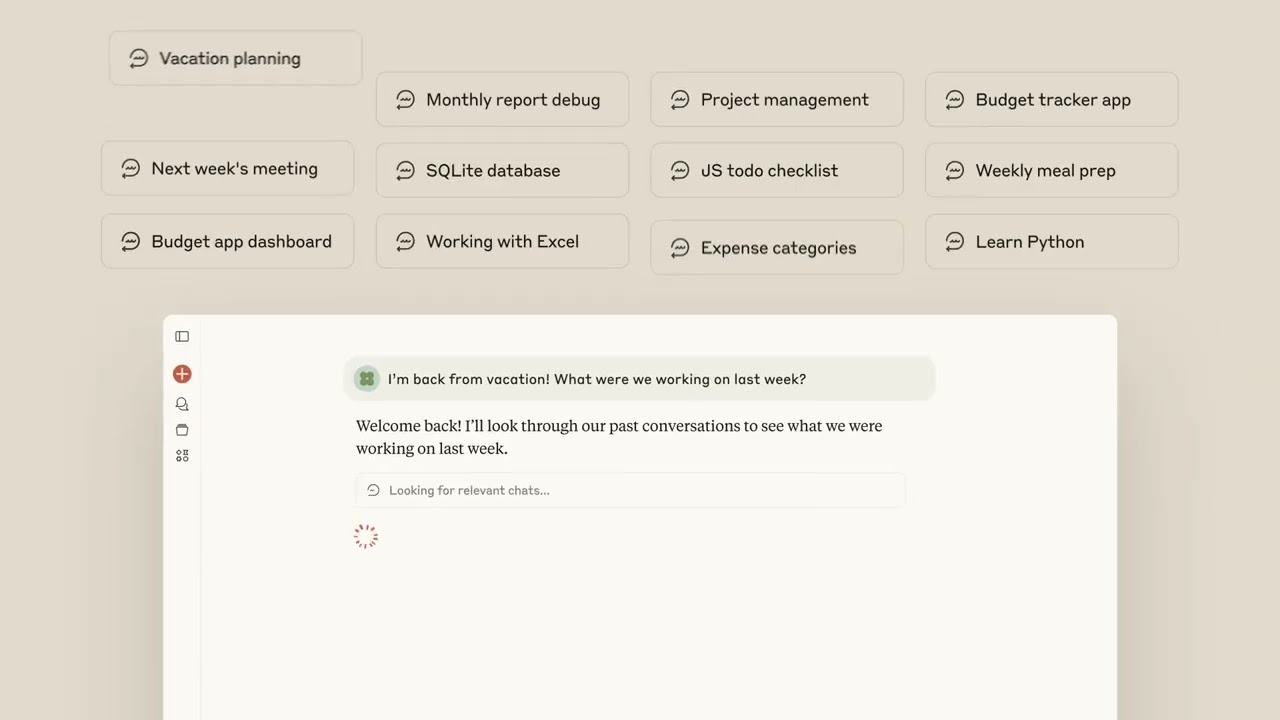

Pick up where you left off with Claude

Never lose track of your work again. Claude now remembers your past conversations, so you can seamlessly continue projects, reference previous discussions, and build on your ideas without starting from scratch every time.

Anthropic was founded in 2021 by former OpenAI researchers who wanted to tackle AI safety as a core engineering discipline, not an afterthought. Their Constitutional AI approach, which trains models to be helpful, harmless, and honest, has become an industry reference point. Claude is now one of the most widely used AI assistants, recognized for its nuanced reasoning, long context window, and lower tendency to hallucinate than its peers.

Timeline

Anthropic founded by former OpenAI researchers focused on AI safety.

Claude 1 released; Constitutional AI technique published.

Claude 2 released with 100K context window.

Claude 3 family (Haiku, Sonnet, Opus) launches; major Amazon investment.

Claude 3.5 Sonnet sets new benchmark scores; Model Context Protocol (MCP) released.

Claude 4 family released; Claude Code launched as an agentic coding tool.